Resources

Feed your brain. Power your campaigns.

Inspire smarter thinking. Accelerate your success.

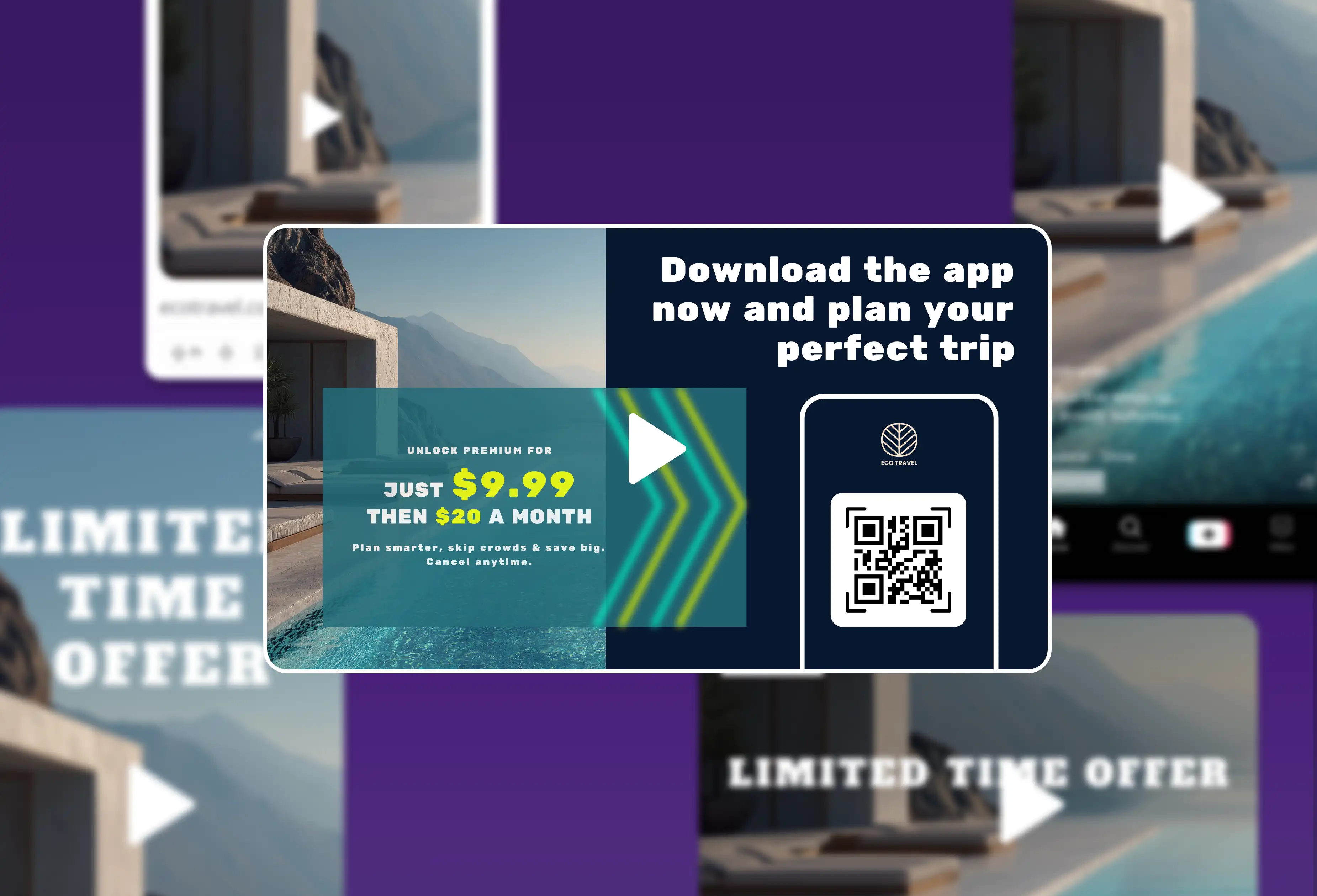

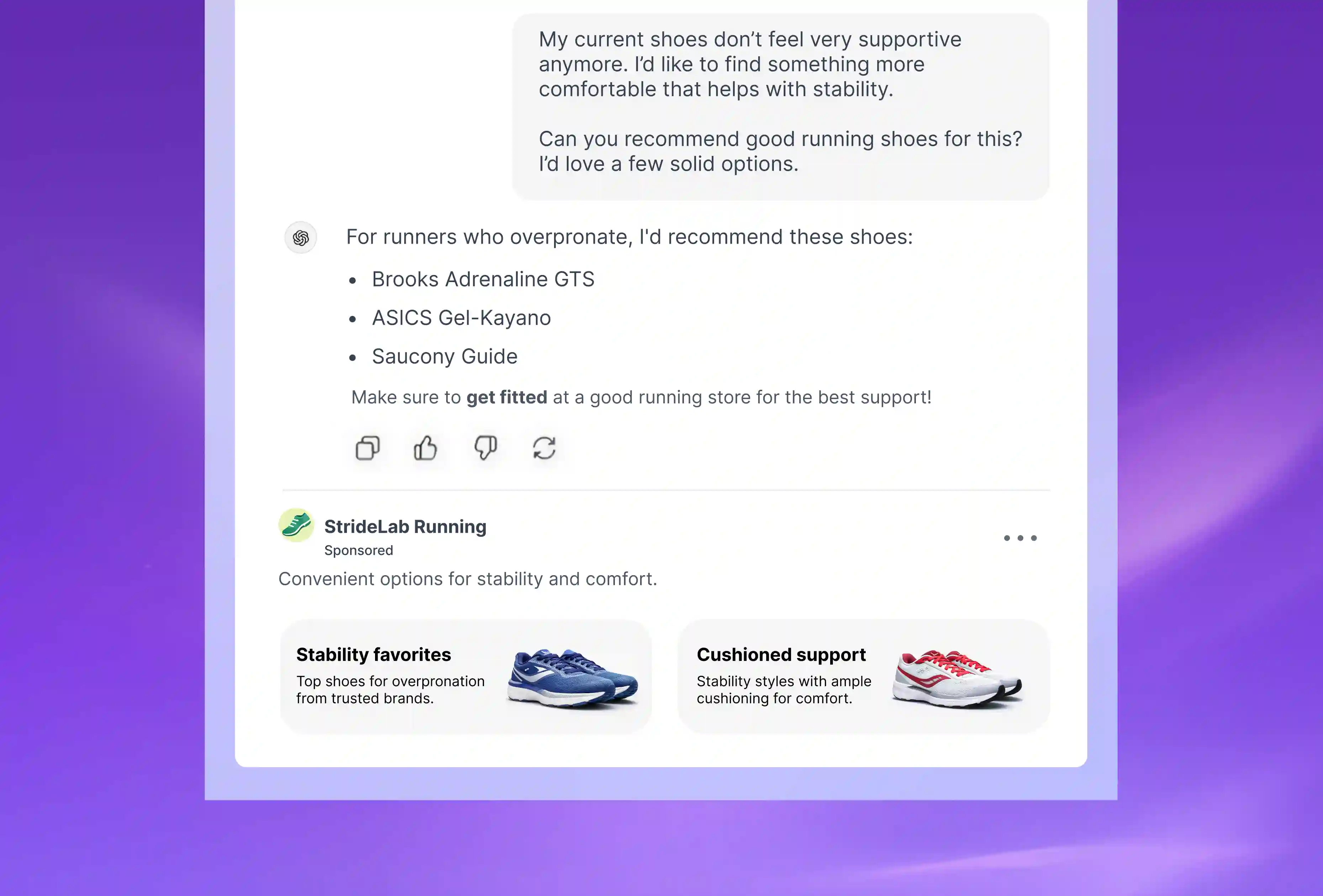

Get all the details and inspiration you can handle on using Smartly to power better ads for your brand.


Meet us. Read about us.

Honestly, we'd rather just show you.

Chat with our team to see how Smartly transforms the fragmented advertising ecosystem into something suspiciously manageable.
