25 Petabytes Later — Update on Our Image Rendering Architecture

It’s been more than two years since my previous post on Smartly.io’s image processing architecture. Since 2016 our outgoing image traffic has multiplied several times over, as has the size of our infrastructure. Currently, we’re pushing out bits at a constant rate of 3-8Gbps with spikes surpassing 18Gbps. In our record month so far, we had 2.7PB outgoing image traffic. Yes, that’s petabytes. The number of servers powering the system has grown almost tenfold to several hundred machines.

In fact, our outgoing image traffic has grown so much that once we even broke the Internet. Well, at least in a very local way: We completely saturated the backbone peering link between our hosting provider Hetzner and Facebook. We had to scale down while they added more bandwidth.

Outgoing traffic over 30 days

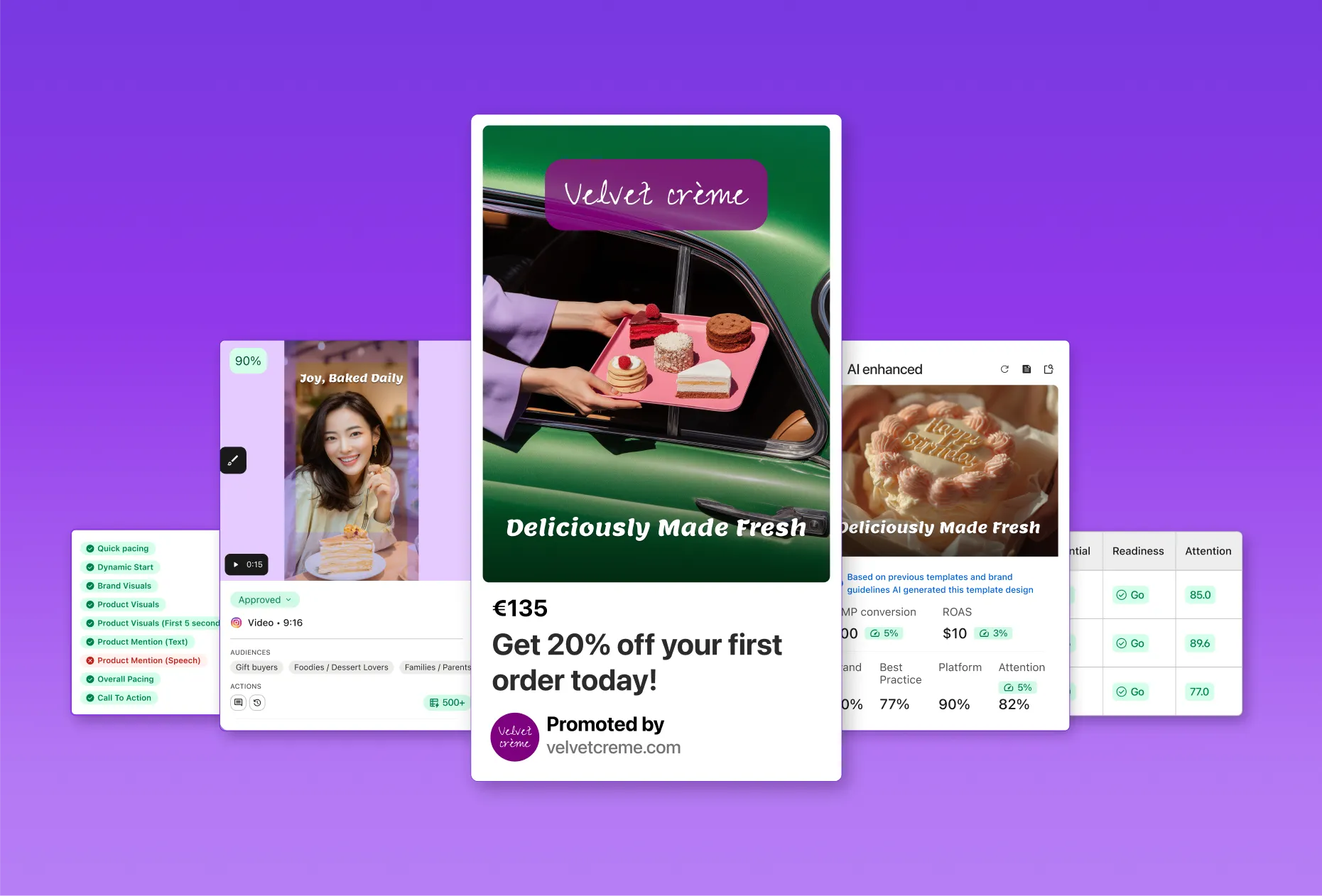

As a quick reminder, our image processing system powers a feature called Dynamic Image Templates. Advertisers have product catalogs (or feeds) that may contain millions of products, and they need to be able to create ads for all of those products in a scalable manner without manual manipulation of each product image. The product catalogs include information such as product names, prices and image URLs.

Our tool allows advertisers to combine this data to produce compelling ad images automatically. Often the original product images also need to be resized or cropped. Image templates can do that too, along with many advanced operations like conditionally adding a sale price ribbon only for products that are on sale. An image for a product is rendered on-demand when a URL that contains the image template ID and product specific data is requested. That is, our rendering capacity must be at a level that can match the number of requests that Facebook and others can throw at us.

Image template editor in action

Coping with increasing load — throttling, caching & failover

The key building blocks are still the same as in 2016. Software such as HAProxy, Varnish, nginx and Squid is in heavy use. We have done a lot of tuning, though. There are tons of moving parts, and things can go wrong in many ways. For example, misconfigured image templates, unresponsive customer servers and surprisingly CPU-intensive image manipulation operations have caused a plethora of interesting situations. Such problems can cause cascading performance issues that affect many customers. To mitigate them, we have implemented application-level countermeasures, changed our load balancer failover mechanism and improved visibility into what goes on in the system.

Redis for local coordination and caching

In order to keep track of many variables across worker processes, we have implemented several mechanisms related to throttling and caching. For this purpose, each of the worker nodes runs a local Redis instance. Data is shared between the processes inside one instance but not across nodes, which helps us avoid external dependencies, keeping things simple and nimble.

Throttling

We have come up with several ways to throttle rendering to combat many customer or image template specific issues. We throttle all image templates belonging to a specific customer if the original image downloads take too long or if image rendering takes too long. Whenever an operation takes too much time, we simply increment a counter that corresponds to the current minute and that specific customer. If the count for the past few minutes is too high, we refuse to serve more traffic for the customer until the counter falls below the threshold.
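The per-minute counting described above can be modeled roughly as follows. This is a minimal in-memory sketch, not the production code: the class name, the window length and the threshold are all hypothetical, and a plain dictionary stands in for the counters that would live in the local Redis.

```python
from collections import defaultdict


class SlowOperationThrottle:
    """Counts slow operations per customer in one-minute buckets."""

    def __init__(self, window_minutes=5, threshold=100):
        # Both values are illustrative assumptions, not figures from the post.
        self.window_minutes = window_minutes
        self.threshold = threshold
        self.buckets = defaultdict(int)  # (customer, minute) -> count

    def record_slow_operation(self, customer, now):
        # Increment the counter for this customer and the current minute.
        self.buckets[(customer, int(now // 60))] += 1

    def is_throttled(self, customer, now):
        # Sum the buckets for the past few minutes, including the current one.
        current = int(now // 60)
        total = sum(
            self.buckets.get((customer, minute), 0)
            for minute in range(current - self.window_minutes + 1, current + 1)
        )
        return total > self.threshold
```

Because old buckets simply fall out of the window, a throttled customer is served again once the slow operations stop, without any explicit reset step.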

We also try to ensure fairness between customers during high load, so there’s a limit on how many ongoing rendering tasks one customer can have at a time. Thread safety is important for such a limiter as we’re dealing with concurrent operations. For example, consider this naïve pseudocode script where GET and INCR are the corresponding Redis commands:

If you ran it on the application side, there would be no guarantee of atomicity. Someone else could be running it simultaneously, and the counter could be incremented twice, going above the task limit. Luckily Redis allows running Lua scripts on the server side using EVAL. They’re guaranteed to be atomic — no other scripts or commands can run at the same time. Our task limiter is a bit more complicated and it can also handle things like expiring ongoing tasks in case the process that should decrement the counter dies.
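To make the race concrete, here is a small sketch that replays the unlucky ordering deterministically. It assumes a hypothetical in-memory `CounterStore` in place of Redis; `start_if_below` uses a lock to imitate what a server-side script provides, namely that the check and the increment happen as one step.

```python
import threading


class CounterStore:
    """In-memory stand-in for a key-value store with GET and INCR."""

    def __init__(self):
        self._values = {}
        self._lock = threading.Lock()

    def get(self, key):
        return self._values.get(key, 0)

    def incr(self, key):
        self._values[key] = self._values.get(key, 0) + 1
        return self._values[key]

    def start_if_below(self, key, limit):
        """Check and increment as one indivisible step."""
        with self._lock:
            if self.get(key) < limit:
                self.incr(key)
                return True
            return False


def naive_interleaving(store, key, limit):
    """Both workers read the counter before either one writes it."""
    seen_by_a = store.get(key)
    seen_by_b = store.get(key)
    started = 0
    for seen in (seen_by_a, seen_by_b):
        if seen < limit:  # each worker decides based on its stale read
            store.incr(key)
            started += 1
    return started
```

With a limit of 1, `naive_interleaving` lets both workers start, while two consecutive calls to `start_if_below` admit only the first.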

The Lua script that gets called before a task gets to start looks like this:

The arguments explained:

- KEYS[1] - Redis list that holds the start times of all currently running tasks

- KEYS[2] - Redis list that holds the start times of all currently running tasks related to this specific customer

- ARGV[1] - Global hard limit - do not process more than this number of tasks concurrently

- ARGV[2] - Throttling start limit

- ARGV[3] - Task group aka per-customer limit - if the number of all currently running tasks exceeds the throttling start limit, don’t allow more than this number of tasks for any individual customer

- ARGV[4] - current time as a Unix timestamp

Whenever a task (e.g. an image rendering request) is about to start, the worker will run this script. If it returns 0, the worker will wait and try again later until a timeout is exceeded or the script returns 1. In case the task gets to start, the worker will call LREM to remove its timestamp from both lists when it finishes. Additionally, whenever a worker has to wait for its turn, it will check if the first element of each list is an expired timestamp. That helps ensure that if a worker crashed during a task and wasn’t able to remove itself from the lists, the slot will be freed up and another worker can start a task.
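The decision logic described by the arguments and the paragraph above can be modeled in plain Python. This is a sketch of our reading of that description, not the Lua script itself: Python lists stand in for the two Redis lists, the exact boundary comparisons are assumptions, and `max_task_seconds` is a hypothetical parameter for deciding when a timestamp counts as expired.

```python
def try_start(all_tasks, customer_tasks, hard_limit, throttle_start,
              per_customer_limit, now):
    """Return 1 and record the start time if the task may run, else 0."""
    # Global hard limit: never run more than this many tasks at once.
    if len(all_tasks) >= hard_limit:
        return 0
    # Past the throttling start limit, cap each individual customer.
    if (len(all_tasks) > throttle_start
            and len(customer_tasks) >= per_customer_limit):
        return 0
    all_tasks.append(now)
    customer_tasks.append(now)
    return 1


def finish(all_tasks, customer_tasks, started_at):
    """Remove one occurrence of the start time from both lists (LREM-like)."""
    for tasks in (all_tasks, customer_tasks):
        if started_at in tasks:
            tasks.remove(started_at)


def expire_head(tasks, now, max_task_seconds):
    """Drop the oldest entry if it has outlived max_task_seconds."""
    if tasks and now - tasks[0] > max_task_seconds:
        tasks.pop(0)
        return True
    return False
```

Since new start times are appended at the tail, the head of each list is always the oldest task, which is why checking only the first element is enough to free slots left behind by crashed workers.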

This homegrown algorithm could certainly be tuned much further, but so far it has performed very well and we haven’t had to bother our data scientists. The usage patterns of the service are such that in most cases, there’s only one customer, or at most a handful of customers, whose traffic spikes at any one time.

Product image cache index

We also use the local Redis to index a cache of the original product images we download from customers’ servers. As mentioned in the previous post, we have caching Squid proxies between the image processors and the Internet, but we also cache the images on the image processors’ local disks. Even with fast networking, a local SSD is still orders of magnitude faster, so leveraging the disks available on the nodes makes a lot of sense.

We used to clean up the disk cache using scripts based on the find command that would just remove the oldest files when disk space was about to run out. With hundreds of gigabytes of data, find is unfortunately very slow even on SSDs and affects system performance, so we had to look for alternatives. Somewhat to our surprise, we were unable to find any good existing solutions for holding temporary files and deleting them when disk space is about to run out.

To get rid of find, we decided to implement a secondary index in Redis using sorted sets. Whenever our system downloads an image, it adds the filename to the sorted set with the current Unix timestamp as its score. Another process monitors free disk space, and whenever it gets low enough, the process starts reading the sorted set, lowest score first, and deletes the corresponding files, oldest first. This solution has worked extremely well and required very few changes to how we handle the files.
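As an illustration of the idea, the sketch below models the index with a dictionary mapping filename to timestamp, which plays the role of the sorted set. The function names, the `bytes_to_free` target and the `size_of` and `delete` callbacks are hypothetical; the post does not say how much space the cleanup process reclaims per run.

```python
def record_download(index, filename, now):
    """ZADD-like: remember when a file was cached (score = timestamp)."""
    index[filename] = now


def evict_oldest(index, bytes_to_free, size_of, delete):
    """Delete files lowest score first until enough space is reclaimed."""
    freed = 0
    removed = []
    # Sorting by score mimics reading the sorted set from the low end.
    for filename in sorted(index, key=index.get):
        if freed >= bytes_to_free:
            break
        freed += size_of(filename)
        delete(filename)
        removed.append(filename)
    for filename in removed:
        del index[filename]
    return removed
```

The point of the design is that the cleanup never has to scan the filesystem: the order of deletion comes entirely from the index.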

Because none of the uses we have for Redis require data persistence, we have disabled disk writes entirely so that Redis works in-memory only to prevent disk writes from affecting performance. For the cache index, this requires the cleanup script to go through all the files on disk once when the system has been rebooted. It takes some time, but we’ve decided it’s an acceptable cost.
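The one-off rebuild after a reboot could look something like the sketch below. It assumes, hypothetically, that the file modification time is a good enough substitute for the original download timestamp; the post does not say which value the real cleanup script uses as the score.

```python
import os


def rebuild_index(cache_dir):
    """Walk the cache directory once and map each file path to its mtime."""
    index = {}
    for root, _dirs, files in os.walk(cache_dir):
        for name in files:
            path = os.path.join(root, name)
            # Assumption: mtime approximates the time the file was cached.
            index[path] = os.stat(path).st_mtime
    return index
```

This full walk is the slow part that the index otherwise avoids, which is why it only runs once after a restart.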

Database access

One significant change to the architecture is that the image rendering servers no longer have direct access to databases for fetching image template data. Instead, there’s a caching proxy API in between. This allows us to do more centralized caching in addition to caching the image templates on each image processor. It also reduces the database servers’ load by reducing the number of clients they have to serve. On the software architecture side, this has allowed us to move the image processing code out of a monolithic codebase.

Load balancer failover

Previously we were using our hosting provider’s capabilities for failover in case a load balancer failed. It’s possible to route the IP address of a failed host to another one by using their API. In the end, this proved not to be the most effective way. There were two major problems with the approach:

- When a load balancer went down, all of its traffic went to one other load balancer. In times of heavy load, this could overwhelm the other load balancer and cause cascading effects as its traffic doubled.

- We had to maintain a fairly complex piece of software that handled the monitoring and failover procedure.

To solve these issues, we migrated our DNS management to Constellix and adopted its DNS-based failover. Now, when a load balancer goes down, it just gets removed from the DNS pool and traffic gets divided evenly among the other load balancers. We have been pleased with the results, and, as we all know, killing a difficult-to-maintain service like our old failover monitor is always heartwarming. DNS-based load balancing has its downsides as well, such as delay in failover, but with a short enough time-to-live in the DNS records, it’s an acceptable trade-off for us.
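A bit of arithmetic shows why the difference matters. The sketch below assumes a hypothetical pool of `n` load balancers that share traffic evenly before the failure, and compares the per-balancer share of total traffic after each kind of failover.

```python
from fractions import Fraction


def shares_after_ip_takeover(n):
    """One surviving balancer inherits the failed one's whole share."""
    share = Fraction(1, n)
    return [2 * share] + [share] * (n - 2)


def shares_after_dns_removal(n):
    """The failed balancer leaves the pool; the rest split traffic evenly."""
    return [Fraction(1, n - 1)] * (n - 1)
```

With four balancers, IP takeover leaves one machine carrying half of all traffic, whereas removing the failed host from the DNS pool leaves each of the three survivors with a third.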

Logging and Metrics

Being able to see how things are doing is very important for any system, let alone one of this scale. For logging, we use the ELK Stack, and for metrics, we use the somewhat similar stack sometimes labeled as TIG, consisting of Telegraf, InfluxDB and Grafana. Both serve similar purposes and have some overlap. In Kibana we can drill down to individual requests and see the exact parameters related to them, and in Grafana we can see broader trends and also metrics from the services that aren’t in-house code, such as HAProxy and Squid.

A sampling of successful requests in Kibana. Typically most traffic comes from a handful of customers at a time, depending on their catalog, advertisement campaign, and image template update schedules.

One major difference between the two systems is that in InfluxDB we can easily store some data for every request and keep it for a long time because time-series data can be stored in a very compact form. On the Elasticsearch side, each log entry is quite large as it contains lots of details. Due to this, we have opted only to log a small percentage of successful requests. All failures are logged until a per-customer threshold—another thing we keep track of in Redis—is exceeded, and only then do we start dropping log entries.
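The logging policy above can be sketched as a small decision function. The class name, the sampling rate and the failure limit are hypothetical, a `Counter` stands in for the per-customer counts kept in Redis, and the sketch leaves out any resetting of the counts over time, which the post does not describe.

```python
import random
from collections import Counter


class LogSampler:
    """Decides whether a request should be written to the log."""

    def __init__(self, success_rate=0.01, failure_limit=1000, rng=None):
        self.success_rate = success_rate    # assumed fraction of successes kept
        self.failure_limit = failure_limit  # assumed per-customer failure cap
        self.failures = Counter()
        self.rng = rng or random.Random()

    def should_log(self, customer, status):
        if status < 400:
            # Successful requests: keep only a small random sample.
            return self.rng.random() < self.success_rate
        # Failures: log every one until the customer's cap is exceeded.
        self.failures[customer] += 1
        return self.failures[customer] <= self.failure_limit
```

Capping failures per customer keeps one misconfigured template from flooding the index while still preserving the first failures, which are usually the ones needed for debugging.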

A sampling of failed requests in Kibana. Most of them are HTTP 400 which in this case is due to misconfigurations in the image templates of one customer. The other significant status is 429 which is returned when the load is too high, and we limit the number of simultaneous requests for one or more customers.

Even so, a typical daily index is a couple of hundred gigabytes in size, which is why we don’t want to store them forever. Therefore the nature of the ELK stack is more about inspecting fairly recent data, meaning up to some months old, while in Grafana, we can see longer-term trends as the data retention can be longer. Combined, the systems provide us with an excellent set of tools to figure out most situations, whether it’s about widespread system issues, misconfigured image templates or something in between.

Future plans - more caching and scaling for peaks

Kill the cephalopod

Squid has served us well, but it has its limitations, especially in our use case with HTTPS URLs. To make sure we only download a specific image URL once in a short time and do collapsed forwarding for HTTPS URLs, Squid needs to act as a man in the middle, decrypting and encrypting the traffic again. It’s a CPU intensive task, and we have learned the hard way that if customers have very large feeds, the load is too much for the Squid servers.

One solution for this would be to set up our own image downloader service and put Varnish caches between it and the machines rendering image templates. It would eliminate issues with HTTPS because encryption between Smartly and external services would be terminated at the downloader and Varnish could cache the result. Confidentiality of the source images isn’t a major concern as they will end up being shown publicly as ads, and when it comes to integrity, in this use case, we feel it’s reasonable to trust our hosting provider’s network.

The downloader service could also perform some tasks that are slow to do on the image processor side that uses ImageMagick for rendering the images. ImageMagick is pretty slow in handling very large images, for example, and there are faster libraries such as libvips. Image templates nearly always have one or more layers consisting of downloaded images. With pre-cache image resizing, we would save ImageMagick rendering time and cache disk space.

Handling traffic peaks

So far we have scaled successfully on bare metal servers. One key drawback is that we can’t immediately react if there’s a large load spike. On the other hand, some traffic peaks can be predicted, such as the days and weeks leading up to Black Friday, Singles' Day and Christmas. In those cases, it would make sense to scale up and then back down after the peak has passed.

Peak load situation

We’re currently removing the remaining ties that still exist between our internal production networks and the image processing machines. Once we sort them out, we can consider getting on-demand capacity from elsewhere because it will enable us to set up new machines much faster and in a more automated fashion.

One option would be to use our new Kubernetes cluster, where we could share any idle processing power with other CPU intensive tasks such as Video Templates.

People often ask why we don’t use public cloud solutions. So far the simple reason has been that for this particular use case, we don’t see the value. In AWS, for example, the CloudFront outgoing traffic costs alone would be comparable to the cost of our entire image processing infrastructure, including traffic, at Hetzner. Switching to AWS would bring some nice benefits, but our monthly bill would likely increase two- or threefold, which would amount to a substantial sum of money. Nothing is set in stone, however, and things may change as we scale further.

Growing demand poses interesting challenges

A lot has happened since 2016. Scale has multiplied over and over again and even Internet backbone links have creaked at their seams at times. To keep up with the growing demand, we have multiplied the size of our infrastructure. In addition to adding raw processing power and bandwidth, we have come up with several solutions to cope with the load, ranging from Redis based caching and throttling solutions on the application level to changing our load balancer failover mechanism, and a number of things in between. These changes have made significant contributions to Smartly.io’s ability to serve some of the largest online advertisers.

Together with video template rendering, the image rendering system will continue to provide interesting scaling challenges for years to come. We strongly believe that the ability to produce stunning ad images will continue to become more and more important for advertisers. As scale goes up and Smartly.io’s product goes multiplatform (previously we have supported only Facebook and Instagram), and due to the versatility of our templating tools and our customers’ creativity, there will always be unforeseen issues to tackle.

